Supermodel

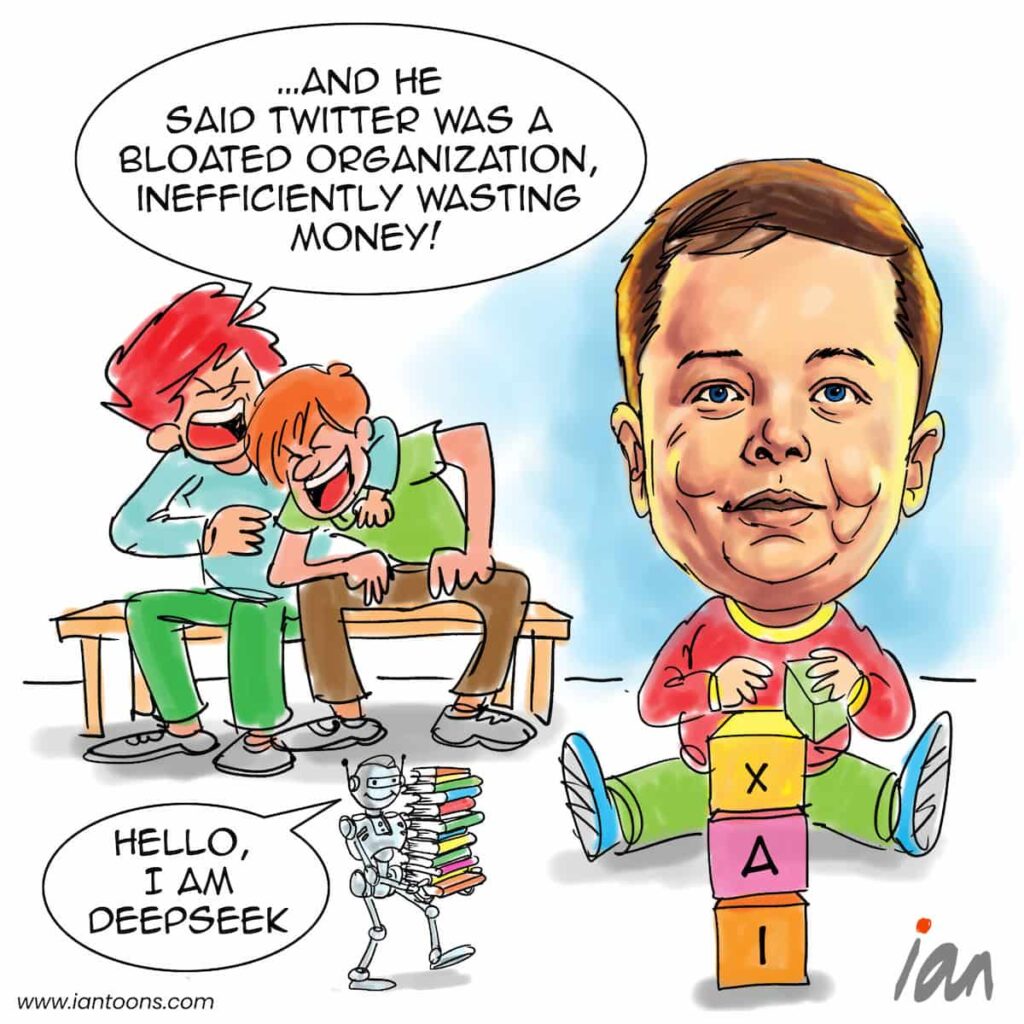

“Supermodel” – a cartoon that illustrates how DeepSeek’s announcement of more efficient AI model creation is good news for consumers.

In August 2024, Elon Musk announced he had secured $640M to build the Colossus cluster, which xAI uses to train its AI models.

The cluster was initially planned to house 100,000 Nvidia GPUs, and by October plans had been announced to double that number.

By December, Elon, referencing Dr Evil from the Austin Powers films, said: “Nope, at least 1 biiillioon GPUs!”

Despite a cost of around $25,000 per GPU, Elon and many companies in the US and Europe showed little concern about raising the capital. Sam Altman, for example, was pursuing investors, including the U.A.E., for a project possibly requiring up to $7 trillion.

Meanwhile, on another planet, there were equally ambitious entrepreneurs who had to innovate in efficiency to get ahead, because U.S. restrictions limited their access to GPU hardware.

Necessity forced a focus on reducing communication overhead, both between GPU nodes and within them, resulting in a model ‘training’ cost of under $6M.

DeepSeek’s hardware optimizations should mean that the capital raised by these AI model companies can go toward speeding up applications that benefit consumers, rather than being poured into GPU hardware and the energy projects needed to run sprawling data centers.

Sources:

Kylie Robison and Elizabeth Lopatto (Jan 28, 2025) – Why everyone is freaking out about DeepSeek – The Verge

Justine Calma (Jan 31, 2025) – AI is ‘an energy hog,’ but DeepSeek could change that – The Verge

Jane Zhang (Feb 2, 2025) – 3 reasons I plan to switch to DeepSeek as an AI startup founder – Business Insider